Global Research Trends

Medical Physics

- [Med Phys.] A streak artifact reduction algorithm in sparse-view CT using a self-supervised neural representation (Development of an artifact reduction algorithm for CT images using a self-supervised neural representation)

Yonsei University / Byeongjoon Kim, Hyunjung Shim*, Jongduk Baek*

- Source

- Med Phys.

- Publication date

- 2022 Dec

- Journal issue

- 49(12):7497-7515. doi: 10.1002/mp.15885. Epub 2022 Aug 8.

- Content


Abstract

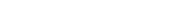

Purpose: Sparse-view computed tomography (CT) has been attracting attention for its reduced radiation dose and scanning time. However, analytical image reconstruction methods suffer from streak artifacts due to insufficient projection views. Recently, various deep learning-based methods have been developed to solve this ill-posed inverse problem. Despite their promising results, they are easily overfitted to the training data, showing limited generalizability to unseen systems and patients. In this work, we propose a novel streak artifact reduction algorithm that provides a system- and patient-specific solution.

Methods: Motivated by the fact that streak artifacts are deterministic errors, we regenerate the same artifacts from a prior CT image under the same system geometry. This prior image need not be perfect but should contain patient-specific information and be consistent with full-view projection data for accurate regeneration of the artifacts. To this end, we use a coordinate-based neural representation that often causes image blur but can greatly suppress the streak artifacts while having multiview consistency. By employing techniques in neural radiance fields originally proposed for scene representations, the neural representation is optimized to the measured sparse-view projection data via self-supervised learning. Then, we subtract the regenerated artifacts from the analytically reconstructed original image to obtain the final corrected image.
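The self-supervised fitting step can be illustrated with a toy sketch. It assumes NumPy, a 2D parallel-beam geometry with a pixel-driven, linearly interpolated projector stored as a dense matrix, and, as a deliberate simplification, a coordinate-based representation built from fixed random Fourier features of the pixel coordinates followed by a single linear layer. This stands in for the multilayer network and NeRF-style training used in the paper; because the output is linear in the weights, fitting to the measured sparse-view sinogram reduces to ridge regression. The names `system_matrix`, `fourier_features`, and `fit_prior` and the parameters `scale` and `lam` are hypothetical.

```python
import numpy as np


def system_matrix(n, angles):
    """Dense parallel-beam system matrix (toy, hypothetical helper).

    Rows are (view, detector bin) pairs; columns are pixels of an n x n image
    in row-major order. Each pixel's value is split between the two nearest
    detector bins by linear interpolation (pixel-driven projection).
    """
    n_det = int(np.ceil(n * np.sqrt(2))) + 2  # wide enough for the image diagonal
    c = np.arange(n) - (n - 1) / 2.0
    X, Y = np.meshgrid(c, c)
    x, y = X.ravel(), Y.ravel()
    pix = np.arange(n * n)
    A = np.zeros((len(angles) * n_det, n * n))
    for i, th in enumerate(angles):
        idx = x * np.cos(th) + y * np.sin(th) + (n_det - 1) / 2.0  # fractional bin
        lo = np.floor(idx).astype(int)
        w = idx - lo
        ok = (lo >= 0) & (lo + 1 < n_det)  # always true with this n_det; kept as a guard
        np.add.at(A, (i * n_det + lo[ok], pix[ok]), 1.0 - w[ok])
        np.add.at(A, (i * n_det + lo[ok] + 1, pix[ok]), w[ok])
    return A


def fourier_features(n, n_feat, scale, rng):
    """Random Fourier features of normalized (x, y) pixel coordinates.

    Returns an (n*n, 1 + 2*n_feat) matrix: a constant column plus cos/sin
    features. A small `scale` keeps the frequencies low, so any image in the
    span of these features is smooth.
    """
    c = (np.arange(n) - (n - 1) / 2.0) / n  # roughly in [-0.5, 0.5]
    X, Y = np.meshgrid(c, c)
    coords = np.stack([X.ravel(), Y.ravel()], axis=1)
    B = rng.normal(0.0, scale, size=(2, n_feat))
    phase = 2.0 * np.pi * coords @ B
    return np.concatenate([np.ones((n * n, 1)), np.cos(phase), np.sin(phase)], axis=1)


def fit_prior(y, A, Phi, lam):
    """Self-supervised fit: only the measured sinogram y supervises the weights.

    Minimizes ||A @ Phi @ w - y||^2 + lam * ||w||^2 in closed form and returns
    the represented image Phi @ w (flattened).
    """
    M = A @ Phi  # forward-project every basis image
    w = np.linalg.solve(M.T @ M + lam * np.eye(M.shape[1]), M.T @ y)
    return Phi @ w
```

Only the measured sinogram `y` enters the fit; no ground-truth image or external training set is used. In this toy, keeping `scale` small restricts the representation to smooth images, which tends to suppress streaks at the cost of blur, the same trade-off the Methods paragraph describes for the neural representation.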

Results: To validate the proposed method, we used simulated data from extended cardiac-torso phantoms and the 2016 NIH-AAPM-Mayo Clinic Low-Dose CT Grand Challenge, as well as experimental data from physical pediatric and head phantoms. The performance of the proposed method was compared with a total variation-based iterative reconstruction method, naive application of the neural representation, and a convolutional neural network-based method. In visual inspection, small anatomical features were best preserved by the proposed method. The proposed method also achieved the best scores in visual information fidelity, modulation transfer function, and lung nodule segmentation.

Conclusions: The results on both simulated and experimental data suggest that the proposed method can effectively reduce streak artifacts while preserving small anatomical structures that existing methods easily blur or replace with misleading features. Since the proposed method does not require any additional training datasets, it would be useful in clinical practice where large datasets cannot be collected.

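Overview of the proposed method. The neural representation is optimized to the given sparse-view projection data through self-supervised learning that incorporates the CT system model. Because the image distortion caused by sparse views is not random like noise but is determined by the given system and patient, the optimized neural representation is used as a prior image and the artifacts are regenerated through simulation. The regenerated artifacts are then subtracted from the original image to produce the improved final image.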

Affiliation

Byeongjoon Kim¹, Hyunjung Shim¹, Jongduk Baek¹

¹School of Integrated Technology, Yonsei University, Incheon, South Korea.

- Keywords

- computed tomography (CT); inverse problem; neural representation; sparse-view CT; streak artifact.

- Research overview

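- Sparse-view sampling is used in CT to reduce the radiation dose delivered during scanning, to shorten the scan time, or because of the structural constraints of certain CT systems. In this case, however, the insufficient data produce streak artifacts in the reconstructed image. This paper shows that these artifacts can be effectively reduced with a neural representation trained by self-supervised learning. In particular, the proposed method learns on its own from a single scan, yet achieves better image quality and generalization performance than image-domain deep learning methods trained on large amounts of training data. We hope that further research in this direction will effectively address the generalization error of deep learning techniques and enable high-quality CT image reconstruction optimized for each individual patient.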
